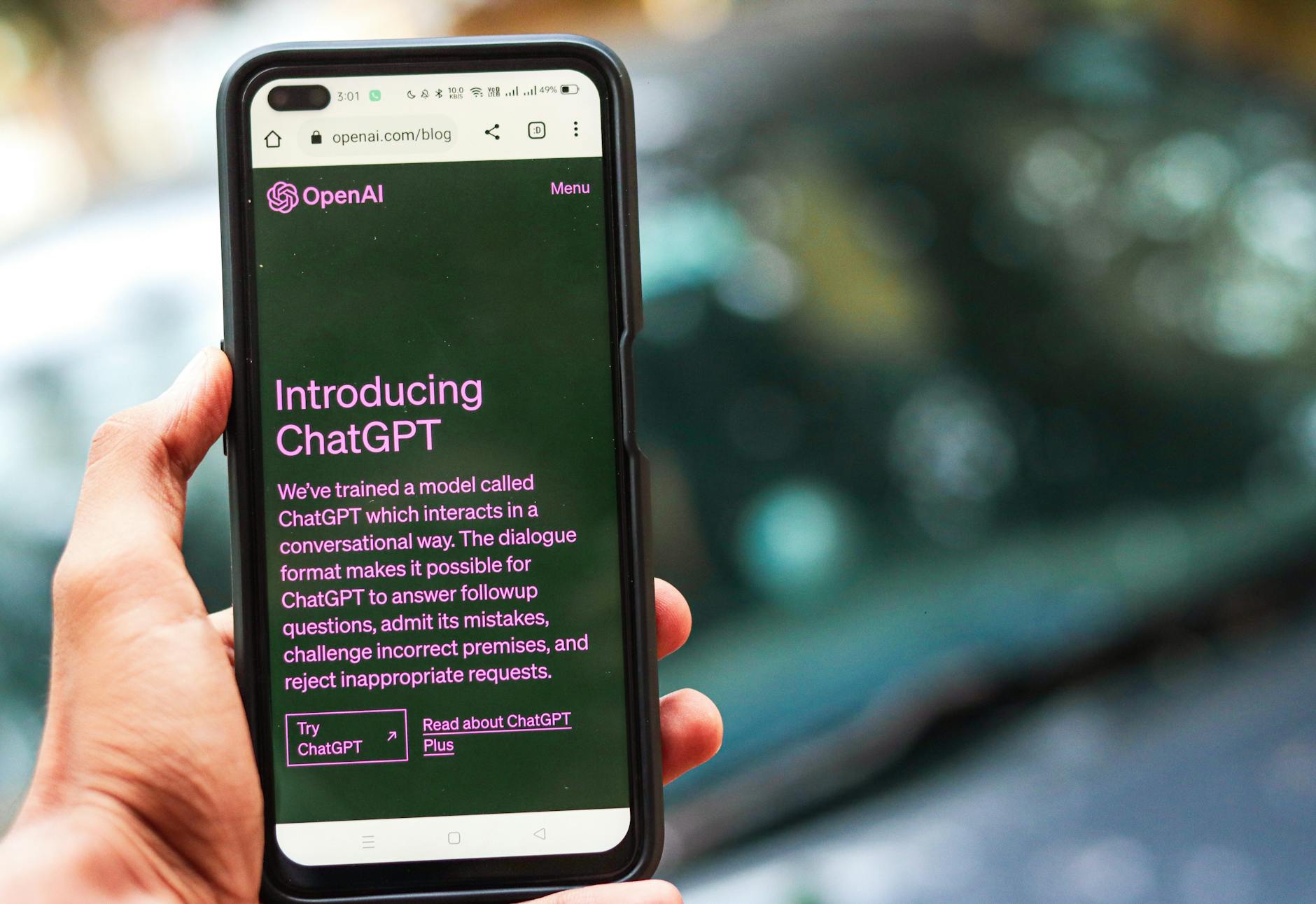

Another cycle. It feels like we just finished digesting the last major AI model update, finding its quirks, integrating it into our workflows, and then, almost before the digital dust has settled, the whispers begin. The next iteration. The next leap. This time, it’s the inevitable comparison: GPT-4o, fresh in its capabilities, against the looming shadow of GPT-5. And, as always, the core question for anyone who actually uses these tools day in, day out isn’t about the marketing sizzle. It’s about the tangible, practical value. And, perhaps most acutely, the token cost.

We’ve seen this script before. Each new model arrives with promises of unprecedented intelligence, speed, and efficiency. GPT-4o, when it landed, certainly delivered a punch. It wasn’t just faster; it was genuinely multimodal in a way that felt more cohesive, less like disparate features bolted together. The ability to fluidly process audio, vision, and text, to understand nuances in spoken commands, to even detect emotion in a voice – these weren’t just incremental gains. For many, it felt like a significant step toward a truly conversational AI, something beyond the text-in, text-out paradigm we’d grown accustomed to.

And then there was the cost. Crucially, GPT-4o launched at half the API price of GPT-4 Turbo, offering better performance for less. This was a win, a rare moment where progress didn’t automatically equate to a steeper bill. For developers building on the API, for content creators running multiple queries, for anyone managing a budget, this was a relief. It allowed for more experimentation and more usage without constantly watching the meter tick up. It made the cutting edge feel a bit more accessible.

The Anticipation of GPT-5: What Are We Really Waiting For?

Now, the conversation shifts to GPT-5. Details are, naturally, scarce and often speculative. But the general expectation is clear: a further refinement of everything GPT-4o started, and then some. We anticipate models that are not just marginally better, but fundamentally more capable in areas like complex reasoning, long-context understanding, and perhaps even a reduction in the persistent problem of ‘hallucinations’—that polite term for when the AI just makes things up. Imagine an AI that can truly grasp the subtle implications of a multi-page legal document, or synthesize research from dozens of disparate sources with fewer logical leaps. That’s the dream, isn’t it?

The promise of GPT-5 isn’t just about faster processing; it’s about deeper comprehension. It’s about a model that can maintain coherence over even longer conversations, understanding historical context without needing constant re-prompts. It’s about more sophisticated problem-solving, moving beyond pattern matching to something closer to genuine inference. For those pushing the boundaries of what AI can do—in scientific research, intricate software development, or highly specialized content generation—these improvements could be transformative.

But with every leap in capability, there’s an unspoken understanding: the cost will likely climb. This isn’t a cynical observation; it’s the reality of advanced computing. Training these models is astronomically expensive. Running them at scale demands immense computational resources. So, the question isn’t *if* GPT-5 will be more expensive, but *by how much*, and whether that premium delivers a proportional return on investment.

The Token Cost Conundrum: A Practical Evaluation

For many of us, the token cost isn’t an abstract number. It’s a line item on a budget, a variable in our project planning, a factor that dictates how freely we can experiment or how extensively we can deploy. When GPT-4o offered more for less, it felt like a breath of fresh air. A move towards greater efficiency. If GPT-5 reverses that trend significantly, it forces a hard look at its necessity.

Consider a scenario: a content agency uses an AI model to help draft blog posts, social media updates, and ad copy. With GPT-4o, they might be able to generate several variations, refine them, and even get initial image suggestions without breaking the bank. If GPT-5 arrives at double the token cost, the same monthly budget buys half as many tokens, and therefore roughly half as many drafts. Do the incremental gains in nuance or creativity justify that? Does the agency accept half the output, or does it become far more selective about where it uses the model?
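To put rough numbers on that scenario, here is a minimal Python sketch. It assumes a fixed monthly budget, a fixed number of input and output tokens per draft, and separate per-million-token prices for input and output; every figure is a hypothetical placeholder rather than published pricing for either model, and the helper names are made up for illustration.

```python
from decimal import Decimal

# Every number here is a hypothetical placeholder, not real pricing for any model.
# Decimal keeps the money arithmetic exact; with floats, 1 // 0.1 evaluates to 9.0.

MILLION = Decimal(1_000_000)


def cost_per_draft(input_tokens: int, output_tokens: int,
                   input_price_per_m: Decimal, output_price_per_m: Decimal) -> Decimal:
    """USD cost of one draft, given separate per-million-token input/output prices."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / MILLION


def drafts_per_budget(budget: Decimal, per_draft: Decimal) -> int:
    """Number of whole drafts a fixed budget can pay for."""
    return int(budget // per_draft)


budget = Decimal("500")  # assumed monthly budget in USD
current = cost_per_draft(3_000, 1_000, Decimal("4"), Decimal("12"))
doubled = cost_per_draft(3_000, 1_000, Decimal("8"), Decimal("24"))  # "double the token cost"

print(drafts_per_budget(budget, current))  # 20833
print(drafts_per_budget(budget, doubled))  # 10416
```

With these placeholder numbers, the same $500 covers 20,833 drafts before the price change and 10,416 after, which is the halving the scenario worries about (the missing half-draft is just rounding down to whole drafts). Swap in your own token counts and the current price sheet to see where your budget actually lands.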

The decision to upgrade isn’t just about raw power; it’s about the marginal utility. Are the improvements in GPT-5 going to solve problems that GPT-4o simply couldn’t touch? Or are they going to offer a 10% improvement for a 50% price hike? That’s the kind of calculation that separates the hype from the practical application.
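One way to frame the "10% better for 50% more" question is cost per usable output: if only some drafts are good enough to keep, you pay for the rejects too. The sketch below assumes "better" means a higher acceptance rate (a 10% relative improvement, from 60% to 66%) and that price scales per call. Both the framing and the numbers are illustrative assumptions, not measurements of GPT-4o or GPT-5, and the function names are hypothetical.

```python
def cost_per_usable_output(price_per_call: float, acceptance_rate: float) -> float:
    """Expected spend per output you keep: rejected outputs are paid for too."""
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in (0, 1]")
    return price_per_call / acceptance_rate


def break_even_acceptance(old_price: float, old_rate: float, new_price: float) -> float:
    """Acceptance rate the pricier model needs to match the old cost per usable output."""
    return new_price * old_rate / old_price


# Hypothetical, normalized figures: the old model costs 1.0 per call and 60% of its
# outputs are usable; the new one costs 50% more and is 10% better (60% -> 66%).
old = cost_per_usable_output(1.0, 0.60)
new = cost_per_usable_output(1.5, 0.66)

print(f"old: {old:.2f}, new: {new:.2f}, change: {new / old - 1:+.0%}")
# old: 1.67, new: 2.27, change: +36%
print(f"break-even acceptance: {break_even_acceptance(1.0, 0.60, 1.5):.0%}")
# break-even acceptance: 90%
```

Under these assumptions, the pricier model still costs about 36% more per draft you actually keep, and it would need a 90% acceptance rate just to break even. If the gain shows up as less post-editing time instead, the same logic applies with editor hours in place of the acceptance rate.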

The real question isn’t ‘Is GPT-5 better?’ It’s ‘Is GPT-5 better *enough* to justify its likely higher token cost for *my specific use case*?’

For a researcher needing to sift through terabytes of data to find a needle in a haystack, that incremental improvement in reasoning might be priceless. For a casual user looking for better summarization of emails, GPT-4o might already be overkill, and GPT-5 an unnecessary luxury.

E-E-A-T and the AI Model: Still a Human Endeavor

This discussion naturally leads to E-E-A-T: Experience, Expertise, Authoritativeness, and Trustworthiness, the quality framework set out in Google’s Search Quality Rater Guidelines. We’ve been talking about it for years in the context of content quality and search engine visibility. Now, with AI models becoming increasingly sophisticated, it’s worth asking how they fit into this picture.

Can a GPT-5 model, however advanced, truly embody E-E-A-T? Not directly. A model can provide factual information, synthesize data, and even mimic a specific tone, contributing to the ‘Expertise’ component by assembling information. It can help structure arguments that appear ‘Authoritative’ if the underlying data is sound and presented logically. But ‘Experience’ and ‘Trustworthiness’ remain fundamentally human attributes.

A GPT-5 might be able to write an article about the nuances of quantum physics with astonishing accuracy, drawing on vast datasets. It might even simulate a personal anecdote. But it hasn’t *lived* the experience of struggling through a complex experiment, of having a hypothesis fail, of the quiet satisfaction of a breakthrough. That lived-in quality, that subtle realism that resonates with a reader, still comes from a human author. The AI is a tool, a powerful one, but a tool nonetheless.

We’ve seen the cycles of content creation, the push for speed, the race to publish. The danger, always, is that in the pursuit of efficiency, we lose the very human element that builds trust. A more capable AI like GPT-5 could, in theory, help *humans* produce content that better aligns with E-E-A-T by providing more accurate data, better structured arguments, and deeper insights. But the ultimate responsibility for verifying facts, for infusing genuine experience, and for building trust, still rests with the human editor, writer, or subject matter expert.

Who Benefits Most from the Bleeding Edge?

The upgrade path is rarely linear for everyone. For some, the jump from GPT-3.5 to GPT-4 was a game-changer. For others, GPT-4o’s multimodal capabilities opened up entirely new applications. GPT-5 will likely be a must-have for certain innovators and industries.

- AI Researchers and Developers: Those pushing the boundaries of AI capabilities, building complex applications, or exploring novel interactions will likely find the enhanced reasoning and deeper understanding of GPT-5 indispensable. Their work often demands the absolute peak of current technology.

- High-Volume Content Factories: Organizations that rely heavily on automated content generation, where marginal gains in quality or reduction in post-editing time can translate to significant cost savings at scale, might find the upgrade worthwhile, despite the token cost.

- Specialized Industries: Fields requiring extreme precision, like legal document analysis, highly technical writing, or complex scientific data interpretation, might see GPT-5 as a necessary investment if it significantly reduces errors or improves the accuracy of critical outputs.

But for the vast majority of users—the small businesses, the individual content creators, the everyday productivity hackers—the calculus is different. Is the current model, GPT-4o, already meeting 90% of your needs? Is that last 10% worth a potentially substantial increase in operational cost? Sometimes, the smartest move isn’t to chase the newest, shiniest object, but to fully master the capabilities of the tool you already have.

The Enduring Question of Diminishing Returns

We’ve witnessed the rapid acceleration of AI capabilities. The leaps from one generation to the next have been astonishing. Yet, as models become more powerful, the perceived jump in utility for the average user often starts to feel less dramatic. The difference between a passable text generator and a truly intelligent one is vast. The difference between an incredibly intelligent one and an even more incredibly intelligent one might be harder to discern in daily tasks. It’s like upgrading your internet speed from 500 Mbps to 1 Gbps. Sure, it’s faster, but unless you’re constantly downloading massive files, you might not feel the day-to-day impact as much as the jump from 50 Mbps to 500 Mbps.
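The internet-speed analogy is easiest to see in absolute terms. Assuming a hypothetical 1 GB file, decimal units (1 GB = 8,000 megabits), and no protocol overhead, this short sketch computes the seconds each upgrade actually saves.

```python
def download_seconds(file_gigabytes: float, speed_mbps: float) -> float:
    """Seconds to download a file at a given link speed, ignoring protocol overhead.

    Uses decimal units: 1 GB = 8,000 megabits, so time = GB * 8000 / Mbps.
    """
    return file_gigabytes * 8_000 / speed_mbps


size_gb = 1.0  # hypothetical 1 GB download
for slow, fast in [(50, 500), (500, 1_000)]:
    saved = download_seconds(size_gb, slow) - download_seconds(size_gb, fast)
    print(f"{slow} -> {fast} Mbps: saves {saved:.0f} s per GB")
# 50 -> 500 Mbps: saves 144 s per GB
# 500 -> 1000 Mbps: saves 8 s per GB
```

The first upgrade gives back 144 seconds per gigabyte; the second gives back 8. The argument above is that model upgrades can feel similar for everyday tasks, though that part is an analogy rather than something this arithmetic proves.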

The constant churn of new models, the endless cycle of announcements and upgrades, can be exhausting. There’s a quiet pressure to always be on the bleeding edge, to use the ‘best’ tool available. But experience teaches us that ‘best’ is subjective. It’s defined by your specific needs, your budget, and the actual problems you’re trying to solve. The question of GPT-4o versus GPT-5 isn’t just about technical specifications; it’s a deeper inquiry into value, utility, and the subtle art of knowing when enough is truly enough. Sometimes, the most powerful tool is the one you understand best, and can afford to use freely, without constantly watching the token meter tick away. The cold coffee sitting beside my keyboard, still waiting for me to finish this thought, reminds me that even the smartest AI can’t replace focused human attention to detail.